VIVEK WADHWA: Apple's iPhone battle with the government will likely be a privacy setback

Imagine if the government required you to have a combination lock on your door and to give it the combination. It would create security and privacy risks for you and your family. This is what could happen if we required the technology industry to add back doors to its software and devices. Hackers, criminals, and foreign governments could crack the code and abuse it. This is what the technology industry is rightfully rallying against.

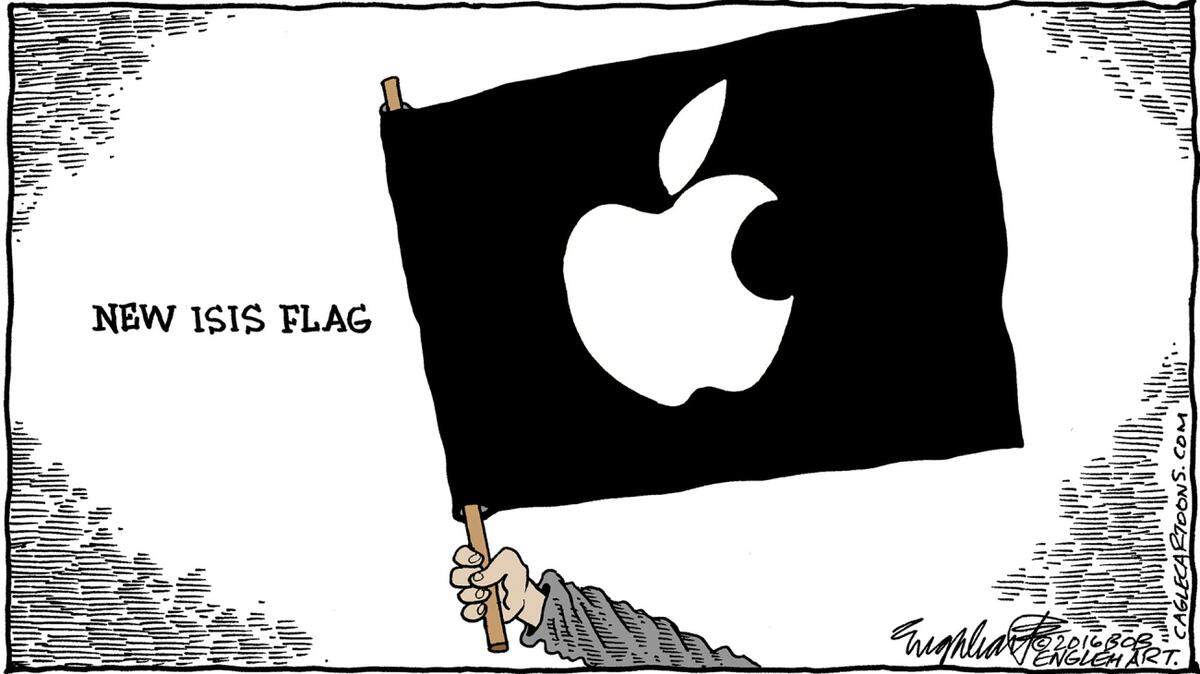

But this isn't the fight that Apple just picked with the U.S. government. It refused to comply with a search warrant to unlock an iPhone that was used by one of the terrorists who killed 14 people and injured 22 in San Bernardino last year. The government had the permission of the owner of the device, San Bernardino County, and made a reasonable request.

'Reputational grounds'

Apple claims that complying with the request would have required it to develop a new version of its operating system -- and create a back door. But technology experts disagree. They say Apple could easily unlock the phone without creating a back door or security risk. Security research firm Trail of Bits says Apple could add support for a peripheral device that facilitates PIN code entry and use it through a customized version of iOS that works only on a single device. Apple could do this on its own and not share the firmware with the FBI -- or anyone else.

Apple has, after all, done such things before. The Daily Beast reports that it complied with government requests to unlock iPhones at least 70 times in the past. And Apple acknowledged that it had the technical capacity to do this. Its objection to the new request was on "reputational grounds." It seems that Apple wasn't as concerned about its reputation until now.

By picking this particular fight, Apple is doing the technology industry a big disservice. The public desperately wants protection from terrorists, foreign governments, and hackers. Since 9/11, Americans have accepted certain limits on civil liberties -- limits that protect their privacy yet provide the government with enough information to be effective at its job.

Apple will very likely lose this case in the courts and suffer a public relations disaster. And this will be a setback for privacy. After all, this battle isn't going to be portrayed as being about encryption and back doors; it is going to center on protecting the data of murderous terrorists. Other than Silicon Valley purists, few will side with Apple.

All about the data

The technology industry is really not in a position to throw stones; it lives in a glass house. It has, after all, created the operating systems and devices that are hacked so easily. In other industries, product manufacturers would be held liable for the safety and security of their products. Yet tech seems to get a free ride; its hacked customers take the blame.

Big Brother would be envious of the surveillance capabilities of Google and Apple. They read our emails before we do and keep track of our searches; their mobile devices log our movements and activities; and the apps that we download commonly trick us into giving them our contact lists and other personal data.

The tech industry wants to learn all it can about us so that it can market more products and services to us -- and sell our data to others. It believes that it owns our data and can use it in any way it wishes. It keeps us in the dark while profiting from us. When our data is hacked, it simply pleads ignorance.

We should have ownership of our data and receive royalties from any use that we permit.

Demand protections

Things are only going to get worse. The next big technology, the Internet of Things, will embed sensors in our appliances, electronic devices, and our clothing. These will be connected to the Internet via Wi-Fi, Bluetooth, or mobile-phone technology. They will gather extensive data about us and upload it to central storage facilities managed by technology companies. Google's Nest home thermostat already monitors our daily movements to optimize the temperature in our homes. In the process, Google learns all about our lifestyles and habits.

The bigger problem is that these devices aren't secure. Because they don't face enough liability, device manufacturers don't feel obliged to invest the time, money, and effort to secure their devices.

So it is great to see Apple and Google supposedly standing up for consumer rights. But they need to provide us with the same protections they are demanding from the government. We need to have our software and hardware secure and our data protected from them -- as well as from the bad guys.

Vivek Wadhwa is a fellow at Rock Center for Corporate Governance at Stanford University, director of research at Center for Entrepreneurship and Research Commercialization at Duke, and distinguished fellow at Singularity University.

This story was originally published February 18, 2016 at 6:56 PM with the headline "VIVEK WADHWA: Apple's iPhone battle with the government will likely be a privacy setback."